The Heinrich/Bird safety pyramid

Pioneering research has become a safety myth

Herbert W. Heinrich was a pioneering occupational health and safety researcher, whose 1931 publication Industrial Accident Prevention: A Scientific Approach [Heinrich 1931] was based on the analysis of workplace injuries and accident data collected by his employer, a large insurance company. This work, which continued for more than thirty years, identified causal factors of industrial accidents including “unsafe acts of people” and “unsafe mechanical or physical conditions”. Heinrich also put forward the domino model of accident causation, a simple linear accident model.

(Unfortunately, H. W. Heinrich’s books are out of print, and quite difficult to find. Copyright on the 1931 edition of his book Industrial Accident Prevention is due to expire in 2026 (28 + 67 years from the date of publication, according to this author’s understanding of section 304 of US copyright law). The 1941 edition of the book is available online at archive.org, thanks to the Digital Library of India.)

The work was pursued and disseminated in the 1970s by Frank E. Bird, who worked for the Insurance Company of North America. F. Bird analyzed more than 1.7 million accidents reported by 297 cooperating companies. These companies represented 21 different industrial groups, employing 1.7 million employees who worked over 3 billion hours during the exposure period analyzed.

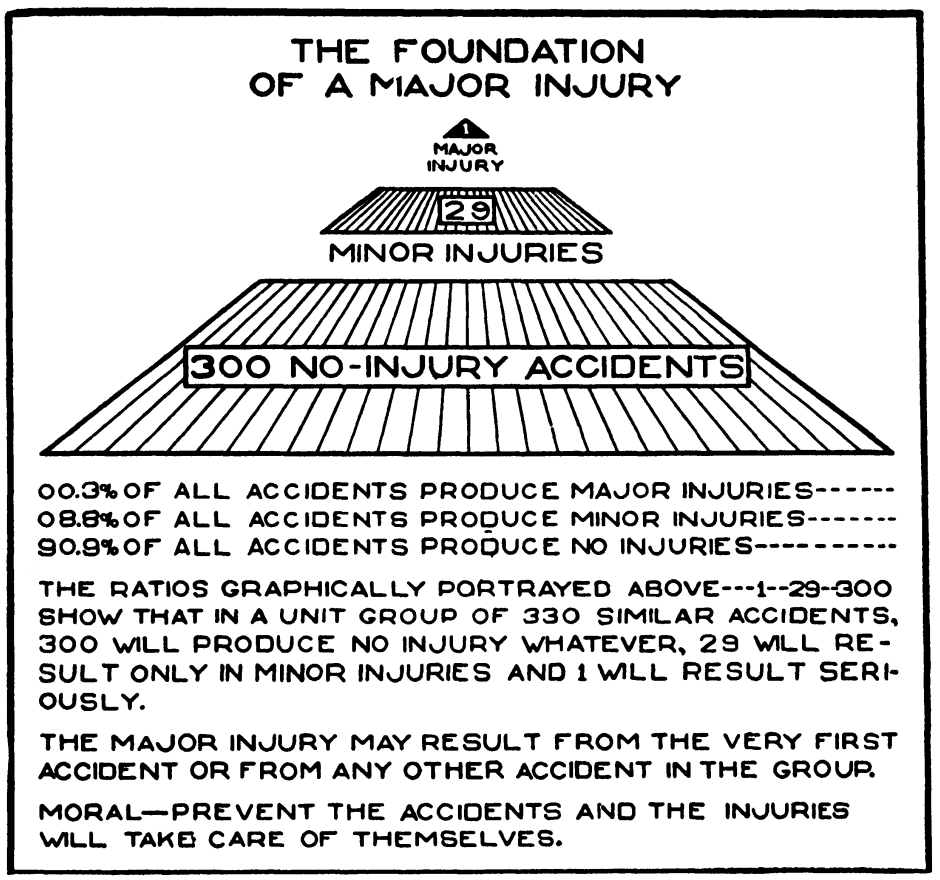

The most famous result is the incident/accident pyramid, also known as the “safety pyramid”, the “accident triangle” and “Heinrich’s law”. The pyramid, as illustrated by Heinrich in the 1941 edition of his book, is shown below.

F. Bird’s later work revealed the following ratios in the accidents reported to the insurance company:

For every reported major injury (resulting in a fatality, disability, lost time or medical treatment), there were 9.8 reported minor injuries (requiring only first aid). For the 95 companies that further analyzed major injuries in their reporting, the ratio was one lost time injury per 15 medical treatment injuries.

This work suggested that the ratio between fatal accidents, accidents, injuries and minor incidents (often reported as 1-10-30-600, and sometimes called “Heinrich’s Law” or the “Heinrich ratio”) is relatively constant, over time and across companies. Note that these numbers refer to accidents and occupational injuries that were reported to the insurance company and incidents discussed with the researchers, which may be rather different from the real number of accidents and incidents. Indeed, more recent research [Manuele 2002] suggests that these ratios are likely to be misleading:

It is impossible to conceive of incident data being gathered through the usual reporting methods in 1926 in which 10 out of 11 accidents could be no-injury cases.

— Heinrich Revisited: Truisms or Myths by Fred A. Manuele, 2002, ISBN 0-87912-245-5, US National Safety Council
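
The dependence of the observed ratios on reporting practices can be illustrated with a short sketch. The counts and reporting rates below are hypothetical values chosen only for illustration (they are not taken from Heinrich's or Bird's data), and the function names are likewise assumptions made for this example.

```python
def pyramid_ratio(counts):
    """Express counts per severity level relative to the first level.

    `counts` is ordered from most severe to least severe.
    """
    base = counts[0]
    if base <= 0:
        raise ValueError("the most severe category needs a non-zero count")
    return [round(c / base, 1) for c in counts]


def reported_counts(true_counts, reporting_rates):
    """Scale each true count by the fraction of events that gets reported."""
    return [c * r for c, r in zip(true_counts, reporting_rates)]


# Hypothetical true counts: major injuries, minor injuries, no-injury incidents.
true_counts = [10, 100, 3000]
# Hypothetical reporting rates: serious events are almost always reported,
# minor events and near misses much less often.
rates = [1.0, 0.8, 0.1]

true_ratio = pyramid_ratio(true_counts)
seen_ratio = pyramid_ratio(reported_counts(true_counts, rates))
print(true_ratio)
print(seen_ratio)
```

Under these assumed reporting rates, the base of the reported pyramid is ten times narrower than the base of the underlying one, so a ratio computed from reported data mostly describes the reporting system.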

Other empirical studies

What evidence is available concerning these issues in workplaces today?

- Analysis of occupational accidents in the Netherlands [Bellamy et al. 2008] suggests that the “shape” of the incident/accident pyramid is dependent on the type of activity and risk type. A followup study found that within a specific hazard type, there is some proportional relationship (which for many hazard types is not linear) between low severity and higher severity accidents [Bellamy 2015].

- Analysis of an industry-wide database of occupational accidents in Chile over a 28-month period [Marshall, Hirmas, and Singer 2018] finds that the ratio of fatal, serious and minor incidents varies depending on the base incident frequency, indicating that (even if the variation is small) the stable “Heinrich ratio” hypothesis is statistically invalid in this country and industry sector.

- A study of incident data in US mines attempted to test the predictive validity of the incident/accident pyramid by checking whether the number of incidents at a mine predicted the number of fatalities at the mine the following year [Yorio and Moore 2018]. The authors conclude that there is “no significant difference in the probability of a subsequent year fatal event between mining establishment that had a total of 16 or less total lost/restricted days and those that had none” (though mines with larger numbers of lost days did have larger probabilities of subsequent fatalities) and later that “the safety triangle is not as obvious and straightforward as many assume it to be”. A later publication from the same group of authors [Moore et al. 2020] finds that the number of medium-severity incidents (such as permanently disabling injuries) in a mine is correlated with the number of fatal accidents in that mine the following year, but that there is no correlation between the number of minor incidents (reportable injuries, days lost to injury) and the number of fatalities in the next year.

The [Moore et al. 2020] article is rather weakened by two points: (1) The authors conclude that “even without establishing that events with different severity levels have a common cause, near miss and lower severity events were demonstrated to be significant predictors of future fatalities. Therefore, efforts that reduce these near miss and lower severity events should be expected to reduce the probability of future fatalities regardless of whether a common cause is shared”. This is a logical fallacy; drawing this conclusion does indeed require the assumption of a common cause. This fallacy is called “post hoc ergo propter hoc” and underlies an argument used by tobacco companies during their long fight against regulation of cigarettes. To demonstrate that smoking causes lung cancer, it’s not sufficient to demonstrate that one predicts the other. There could be some underlying genetic disposition that causes both higher attraction to tobacco and increased probability of having cancer.

(2) The authors conclude that “tracking near misses and lesser severe safety incidents — and using them as a leading indicator within an OHS management system — is a valid practice” without any discussion of the complexities of selecting performance indicators for safety management, and in particular the biases such as under-reporting that they can introduce.

- Empirical studies in the medical area (emergency department attendance, medication errors) [Gallivan et al. 2008] find little evidence for a stable ratio between minor, intermediate and high severity events.
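
The stable-ratio hypothesis examined in the studies above can be illustrated with a generic statistical check. The sketch below is not the methodology of any of the cited papers: it is a plain Pearson chi-square test of homogeneity, applied to hypothetical counts of high, medium and low severity events in two hypothetical groups of sites. If the ratio between severity levels were the same in both groups, the statistic would be close to zero.

```python
import math


def chi_square_homogeneity(table):
    """Pearson chi-square statistic for a table of counts (rows = groups)."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand_total = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Count expected if every group shared the same severity mix.
            expected = row_totals[i] * col_totals[j] / grand_total
            stat += (observed - expected) ** 2 / expected
    dof = (len(table) - 1) * (len(table[0]) - 1)
    return stat, dof


# Hypothetical counts of (high, medium, low) severity events.
low_frequency_sites = [20, 200, 780]
high_frequency_sites = [50, 900, 4050]

stat, dof = chi_square_homogeneity([low_frequency_sites, high_frequency_sites])
# With exactly 2 degrees of freedom, the chi-square survival function
# has the closed form exp(-x / 2), so no statistics library is needed.
p_value = math.exp(-stat / 2)
print(f"chi2 = {stat:.2f}, dof = {dof}, p = {p_value:.4f}")
```

A small p-value indicates that the severity mix differs between the two groups, which is the kind of result that contradicts a stable ratio. The closed-form p-value only holds for a table with two groups and three severity levels; other table sizes would need a general chi-square distribution function.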

A counter-hypothesis: deviations as learning opportunities

Some studies even indicate that there can be, in certain sectors, a negative correlation between the number of recorded incidents and fatality rates (companies where more incidents are reported have fewer deaths). For example, a study of nonfatal accident/incident rates and passenger mortality risk in US airlines in the 1990s found a negative correlation between the number of non-fatal incidents and accidents recorded by airline companies and the probability of a passenger dying on one of their flights [Barnett and Wang 2000]. A study in the construction industry in Finland [Saloniemi and Oksanen 1998] found a negative correlation between the total number of recorded accidents and the number of fatal accidents. There are two possible explanations for these somewhat counterintuitive observations:

- A low rate of recorded incidents and accidents may be caused by underreporting, where incidents are hidden by frontline staff or their managers instead of being reported. Multiple factors can contribute to underreporting, including a blame culture, lack of resources for experience feedback and corporate “zero accident” targets. Underreporting means there is little learning from the “gift of failure” and hinders safety improvements, so a company with few reported incidents may, in fact, be less safe than one where incidents are reported and lessons are learned.

- Non-fatal incidents and accidents provide opportunities for operational staff to understand how their system reacts in abnormal conditions, and provide a form of “training” in managing deviations and bringing the system back under control. They help prevent workers from becoming complacent about the risks in the workplace. (Naturally, it would be safer if this experience could be accumulated on a realistic simulator, during controlled training periods, but such training is expensive.)

These two possible explanations are non-contradictory.

Disputed findings on accident causality

Heinrich’s work was pioneering in analyzing the causal factors that led to workplace accidents, highlighting the associated costs (including hidden costs that are often overlooked) and encouraging managers to think about and invest in prevention of occupational accidents (interrupting an accident sequence). It was an important contribution to changing practitioners’ all too common view of accidents as part of the “cost of doing business” and primarily resulting from the victim’s lack of care. However, some of his findings on accident causality were affected by biases.

One conclusion of Heinrich’s work is that 95% of workplace accidents are caused by “unsafe acts”. Heinrich came to this conclusion after reviewing thousands of accident reports completed by supervisors, and interviewing these supervisors as much as ten years after the relevant incidents. These supervisors are likely to often have blamed workers for causing accidents without conducting detailed investigations into the root causes, which would probably have revealed other causal factors such as unsafe machinery, management pressure to work quickly, and poor information on hazards. These other causal factors are the responsibility of managers, and it is well known that people have natural psychological tendencies to downplay their own contribution to negative outcomes and attribute them instead to other factors (in this case, the “sharp end” workers/victims).

Another disputed finding in Heinrich and Bird’s work concerns the causality of minor incidents and of major accidents. Heinrich stated that:

predominant causes of no-injury accidents are, in average cases, identical with the predominant causes of major injuries, and incidentally of minor injuries as well.

This is incorrect in high-hazard industry today, and can lead to inappropriate allocation of resources. In particular, it leads some companies to an excessive focus on “behavioural safety”, workplace housekeeping and the prevention of low-consequence incidents such as slips and falls, to the detriment of investment in maintenance and technical and organizational safety improvements. Contrary to Heinrich’s assertion above, in industries concerned by major accident hazards, there are significant differences between major accidents and minor incidents: these differences include the activities involved, the amounts of energy released, the characteristics and the numbers of safety barriers that were or could have been relevant to the event.

(“Behaviour-based safety” programmes consist of observing workplace activities to identify deviations from work-as-intended (deviations from procedures, work permits, rules on personal protective equipment). Behaviour modification programmes such as training, coaching, and employees cross-checking each other and reporting what they perceive as being unsafe situations are then implemented, with the intention of helping front-line workers internalize hazard avoidance strategies and recognize the importance of safety barriers (administrative controls). These programmes have been fairly popular in high-hazard industry over the past two decades, but are often criticized by researchers and human and organizational factors (HOF) specialists: they tend to focus attention on worker contribution to incidents and accidents and personal health and safety issues, which inevitably draws attention away from process safety issues and the prevention of major accident hazards.)

Accident causality is often more complicated than Heinrich’s quote suggests, as indicated by the following extract from the BP report into the Deepwater Horizon accident:

The team did not identify any single action or inaction that caused this incident. Rather, a complex and interlinked series of mechanical failures, human judgments, engineering design, operational implementation and team interfaces came together to allow the initiation and escalation of the accident.

Major accidents in high-hazard industries are generally caused by some unlikely combination of circumstances that was not controlled due to poor decision-making, management pressure for performance at the expense of safety, poor communication, or unexpected interactions between different components of complex systems. (The famous report analyzing the Columbia space shuttle accident includes this pithy statement in its conclusion: “complex systems almost always fail in complex ways” [CAIB 2003].) These causal factors are very different from “unsafe acts”, and their management requires specific actions by safety specialists, system designers and system managers that are unrelated to behavioural safety.

Aside

Heinrich’s coauthors felt it necessary to warn against incorrect interpretation of his work, and stated in the fifth edition of his book, published in 1980 after Heinrich’s death ([Heinrich, Petersen, and Roos 1980], quoted in [Hale 2002]):

There has been much confusion about the original ratio in industrial accident prevention. It does not mean, as we have too often interpreted it to mean, that the causes of frequency are the same as the causes of severe injury. National figures show that different things cause severe injuries than the things that cause minor injuries. Statistics show that we have been only partially successful in reducing severity by attacking frequency.

(It’s probably more correct to interpret this warning as reflecting an improved expert/scientific understanding (50 years later) of the causal factors underlying occupational incidents and accidents, than as a way of fixing a gap between what was meant by the initial author Heinrich and the way it was interpreted by readers of the early editions of the book.)

In fact, the rate of minor events (measured by personal injury rates, with indicators such as TRIR) is often mistakenly used as a proxy for overall safety performance, and can lead top management to an incorrect view of the safety of an activity. (On the weaknesses of indicators such as the total recordable incident rate (TRIR) in giving a relevant and complete picture of safety issues, see for example the report The Statistical Invalidity of TRIR as a Measure of Safety Performance, 2020, by Matthew Hallowell, Mike Quashne, Rico Salas, Matt Jones, Brad MacLean and Ellen Quinn, Construction Safety Research Alliance.) This misinterpretation occurred at BP’s refining activities and contributed to the Texas City accident in 2005. Indeed, as the 2007 Baker report on the explosion indicates:

BP mistakenly interpreted improving personal injury rates as an indication of acceptable process safety performance at its US refineries. BP’s reliance on this data, combined with an inadequate process safety understanding, created a false sense of confidence that BP was properly addressing process safety risks.

The same point is made concerning safety regulators in the report of the Major Incident Investigation Board into the Buncefield incident (a major explosion at a petroleum storage depot in the UK in 2005), which notes (page 40):

Previous major incidents around the world such as Texas City, Longford (SE Australia) and Piper Alpha remind us that the task of controlling major hazard risks can become insidiously subverted by undue attention being paid to the less organisationally demanding issues of occupational safety.

Similarly, safety researcher Andrew Hopkins writes [Hopkins 2001] concerning the 1998 gas explosion at an Esso plant in Longford, Victoria, Australia:

Ironically Esso’s safety performance at the time, as measured by its Lost Time injury Frequency Rate, was enviable. The previous year, 1997, had passed without a single lost time injury and Esso Australia had won an industry award for this performance. It had completed five million work hours without a lost time injury to either an employee or contractor. […] LTI data are thus a measure of how well a company is managing the minor hazards which result in routine injuries; they tell us nothing about how well major hazards are being managed. Moreover, firms normally attend to what is being measured, at the expense of what is not. Thus a focus on LTIs can lead companies to become complacent about their management of major hazards. This is exactly what seems to have happened at Esso.
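
A rough numerical sketch helps show why a clean personal injury record says little about rare major events. It assumes the common convention of normalizing TRIR to 200,000 hours worked (100 full-time workers for one year) and models event occurrence as a Poisson process. The injury count and the major event rate below are hypothetical; only the figure of five million work hours is taken from the quote above.

```python
import math

HOURS_PER_100_WORKERS = 200_000  # 100 full-time workers x 2,000 hours per year


def trir(recordable_incidents, hours_worked):
    """Total recordable incident rate per 200,000 hours worked."""
    return recordable_incidents * HOURS_PER_100_WORKERS / hours_worked


def prob_zero_events(true_rate_per_hour, hours_worked):
    """Probability of observing no events, assuming a Poisson process."""
    return math.exp(-true_rate_per_hour * hours_worked)


# Hypothetical site: 3 recordable injuries in 400,000 hours worked.
site_trir = trir(3, 400_000)

# Hypothetical rare major event: one expected per 20 million hours worked.
# Over 5 million hours, a record with no such event is the likely outcome.
p_clean_record = prob_zero_events(1 / 20_000_000, 5_000_000)
print(site_trir, round(p_clean_record, 3))
```

Under these assumptions, a site exposed to a real major accident hazard would most likely still show a spotless record over five million hours, so the absence of events in that period is weak evidence about how well the hazard is managed.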

Disputed interpretations

The work of Heinrich and Bird, and the “safety pyramid” model, are widely used in safety training to justify a focus on behavioural safety (reducing the occurrence of unsafe acts, wearing personal protective equipment, following work procedures strictly, increasing attention to identify workplace hazards), an approach that some trade union representatives call “blame the worker” safety programmes. The pyramid is a useful mental image that helps highlight that:

- major injuries are rare events

- more frequent, less serious events provide opportunities to improve safety in a proactive manner

(It’s interesting to note that the pyramid metaphor is often used in visual representations of simplistic and excessively hierarchical models, in a variety of areas including learning methods, the “hierarchy” of needs/motivation theory attributed to Maslow, and Bloom’s knowledge/skills/attitude taxonomy of educational goals. Note that pyramids have important symbolic value in religions such as Catholicism (representing the trinity) and social/moral movements such as Freemasonry.)

The pyramid metaphor is sometimes combined with that of an iceberg, where the visible part “above the waterline” consists of reported injuries and fatalities, and the invisible part under water consists of all the unreported incidents and near misses. This mental image helps emphasize that there is potential for safety improvement in incidents that do not always get registered in the official reporting system, so it’s worthwhile trying to increase the visibility of these events.

While this mental image is positive in helping prevent occupational accidents (and is clearly very “sticky” in people’s minds…), it is often misinterpreted in ways that reduce attention paid to major accident hazards. One common misinterpretation is “frequency reduction will trigger a severity reduction”. This is a “structuralist” view of the Heinrich pyramid, a mistaken view or myth that “chipping away at the minor incidents forming the base of the pyramid will necessarily prevent large accidents” [Hale 2002]. (Safety researcher Andrew Hale refers to “beliefs which seem so plausible that they command immediate acceptance”.) This view assumes that there is a common cause between minor incidents and major accidents (“what hurts workers is also what kills them”), which is only partly true, as discussed above. It suggests an intervention strategy that is fairly easy to implement (if paternalistic): “focus people’s attention on avoiding minor incidents (slips, trips and falls) and their increased awareness of minor safety problems will prevent the occurrence of major events”. This interpretation is inappropriate concerning process safety and major accident hazards, which require specific focus, as mentioned above.

Conclusion

We have seen that the descriptive validity of the Heinrich/Bird incident/accident pyramid is lower than many safety professionals believe. More importantly, its predictive validity concerning major accident hazards is very low, because the factors that cause low-severity incidents are typically quite different from the factors that cause high-severity accidents. It is time to put this safety myth to rest.

References

Barnett, Arnold, and Alexander Wang. 2000. Passenger mortality risk estimates provide perspectives about flight safety. Flight Safety Digest 19(4):1–12. https://flightsafety.org/fsd/fsd_apr00.pdf.

Bellamy, Linda J. 2015. Exploring the relationship between major hazard, fatal and non-fatal accidents through outcomes and causes. Safety Science 71:93–103.

Bellamy, Linda J., Ben J. M. Ale, J. Y. Whiston, M. L. Mud, H. Baksteen, Andrew R. Hale, I. A. Papazoglou, A. Bloemhoff, M. Damen, and J. I. H. Oh. 2008. The software tool Storybuilder and the analysis of the horrible stories of occupational accidents. Safety Science 46(2):186–197.

CAIB. 2003. Report of the Columbia accident investigation board. NASA. https://www.nasa.gov/remembering-columbia-sts-107/.

Gallivan, Steve, Katja Taxis, Bryony Dean Franklin, and Nick Barber. 2008. Is the principle of a stable Heinrich ratio a myth? Drug Safety 31(8):637–642.

Hale, Andrew R. 2002. Conditions of occurrence of major and minor accidents: Urban myths, deviations and accident scenarios. Tijdschrift Voor Toegepaste Arbowetenschap 15(3). Delft. https://web.archive.org/web/20201021221057/https://www.arbeidshygiene.nl/-uploads/files/insite/2002-03-hale-full-paper-trf.pdf.

Heinrich, Herbert William. 1931. Industrial accident prevention: A scientific approach. New York. McGraw-Hill.

Heinrich, Herbert William, Daniel Petersen, and Nestor Roos. 1980. Industrial accident prevention: A safety management approach. 5th edition. New York. McGraw-Hill.

Hopkins, Andrew. 2001. Lessons from Esso’s gas plant explosion at Longford. In Proceedings of the First National Conference on Occupational Health & Safety Management Systems, edited by Warwick Pearse, Clare Gallagher, and Liz Bluff, 41–52.

Manuele, Fred A. 2002. Heinrich revisited: Truisms or myths. US National Safety Council. ISBN 0-87912-245-5.

Marshall, Pablo, Alejandro Hirmas, and Marcos Singer. 2018. Heinrich’s pyramid and occupational safety: A statistical validation methodology. Safety Science 101:180–189.

Moore, Susan M., Patrick L. Yorio, Emily J. Haas, Jennifer L. Bell, and Lee A. Greenawald. 2020. Heinrich revisited: A new data-driven examination of the safety pyramid. Mining, Metallurgy & Exploration.

Saloniemi, Antti, and Hanna E. Oksanen. 1998. Accidents and fatal accidents: some paradoxes. Safety Science 29(1):59–66.

Yorio, Patrick L., and Susan M. Moore. 2018. Examining factors that influence the existence of Heinrich’s safety triangle using site‐specific H&S data from more than 25,000 establishments. Risk Analysis 38(4):839–852.
